These are the subtle types of errors that are much more likely to cause problems than when it tells someone to put glue in their pizza.

How else are the toppings supposed to stay in place?

Yes, it’s totally OK to reuse fish tank tubing for grammy’s oxygen mask

Obviously you need hot glue for pizza, not the regular stuff.

Wait… why can’t we put glue on pizza anymore?

because the damn liberals canceled glue on pizza!

I wish we could really press the main point here: Google is willfully foisting their LLM on the public, and presenting it as a useful tool. It is not, which makes them guilty of negligence and fraud.

Pichai needs to end up in jail and Google broken up into at least ten companies.

And this technology is what our executive overlords want to replace human workers with, just so they can raise their own compensation and pay the remaining workers even less

So much this. The whole point is to annihilate entire sectors of decent paying jobs. That’s why “AI” is garnering all this investment. Exactly like Theranos. Doesn’t matter if their product worked, or made any goddamned sense at all really. Just the very idea of nuking shitloads of salaries is enough to get the investor class to dump billions on the slightest chance of success.

Exactly like Theranos

Is it though? This one is an idea that can literally destroy the economic system. Seems different to ignore that detail.

Current gen AI can’t come close to destroying the economy. It’s the most overhyped technology I’ve ever seen in my life.

I am starting to think Google put this up on purpose to destroy people’s opinion of AI. They are so far behind OpenAI that they would benefit from it.

I doubt there’s any sort of 4D chess going on, instead of the whole thing being brought about by short-sighted executives who feel like they have to do something to show that they’re still in the game exactly because they’re so much behind "Open"AI

This is the kind of shit that makes Idiocracy the most weirdly prophetic movie I’ve ever seen.

Ignoring the blatant eugenics of the very first scene, I’d rather live in the idiocracy world because at least the president with all of his machismo and grandstanding was still humble enough to put the smartest guy in the room in charge of actually getting plants to grow.

My takeaway from that was the poorly educated had more kids.

Remember when Google used to give good search results?

Like a decade ago?

I wish Infoseek was still around

I wonder if all these companies rolling out AI before it’s ready will have a widespread impact on how people perceive AI. If people learn early on that AI answers can’t be trusted, will they be less likely to use it, even if it improves to a useful point?

will have a widespread impact on how people perceive AI

Here’s hoping.

Personally, that’s exactly what’s happening to me. I’ve seen enough that AI can’t be trusted to give a correct answer, so I don’t use it for anything important. It’s a novelty like Siri and Google Assistant were when they first came out (and honestly still are) where the best use for them is to get them to tell a joke or give you very narrow trivia information.

There must be a lot of people who are thinking the same. AI currently feels unhelpful and wrong, we’ll see if it just becomes another passing fad.

To be fair, you should fact check everything you read on the internet, no matter the source (though I admit that’s getting more difficult in this era of shitty search engines). AI can be a very powerful knowledge-acquiring tool if you take everything it tells you with a grain of salt, just like with everything else.

This is one of the reasons why I only use AI implementations that cite their sources (edit: not Google’s), cause you can just check the source it used and see for yourself how much is accurate, and how much is hallucinated bullshit. Hell, I’ve had AI cite an AI generated webpage as its source on far too many occasions.

Going back to what I said at the start, have you ever read an article or watched a video on a subject you’re knowledgeable about, just for fun to count the number of inaccuracies in the content? Real eye-opening shit. Even before the age of AI language models, misinformation was everywhere online.

Sadly there’s really no other search engine with a database as big as Google. We goofed by heavily relying on Google.

Kagi is pretty awesome. I never directly use Google search on any of my devices anymore, been on Kagi for going on a year.

I just started the Kagi trial this morning, so far I’m impressed how accurate and fast it is. Do you find 300 searches is enough or do you pay for unlimited?

Interesting… sadly paid service.

I use perplexity, I just have to get into the habit of not going straight to google for my searches.

I do think it’s worth the money however, especially since it allows you to customize your search results by white-/blacklisting sites and making certain sites rank higher or lower based on your direct feedback. Plus, I like their approach to openness and considerations on how to improve searching without bogging down the standard search.

Not yet! But you can make a difference to that… https://yacy.net/

It blows my mind that these companies think AI is good as an informative resource. The whole point of generative text AIs is to make things up based on their training data. It doesn’t learn, it generates. It’s all made up, yet they want to slap it on a search engine like it provides factual information.

It’s like the difference between being given a grocery list from your mum and trying to remember what your mum usually sends you to the store for.

… Or calling your aunt and having her yell things at you that she thinks might be on your Mum’s shopping list.

That could at least be somewhat useful… It’s more like grabbing some random stranger and asking what their aunt thinks might be on your mum’s shopping list.

… but only one word at a time. So you end up with:

- Bread

- Cheese

- Cow eggs

- Chicken milk

It really depends on the type of information that you are looking for. Anyone who understands how LLMs work will understand when they’ll get a good overview.

I usually see the results as quick summaries from an untrusted source. Even if they aren’t exact, they can help me get perspective. Then I know what information to verify if something relevant was pointed out in the summary.

Today I searched something like “Are owls endangered?”. I knew I was about to get a great overview because it’s a simple question. After getting the summary, I just went into some pages and confirmed what the summary said. The summary helped me know what to look for even if I didn’t trust it.

It has improved my search experience… But I do understand that people would prefer if it was 100% accurate because it is a search engine. If you refuse to tolerate inaccurate results or you feel your search experience is worse, you can just disable it. Nobody is forcing you to keep it.

you can just disable it

This is not actually true. Google re-enables it and does not have an account setting to disable AI results. There is a URL flag that can do this, but it’s not documented and requires a browser plugin to do it automatically.

I think the issue is that most people aren’t that bright and will not verify information like you or me.

They already believe every Facebook post or ragebait article. This will sadly only feed their ignorance and solidify their false knowledge of things.

The same people who didn’t understand that Google uses a SEO algorithm to promote sites regardless of the accuracy of their content, so they would trust the first page.

If people don’t understand the tools they are using and don’t double check the information from single sources, I think it’s kinda on them. I have a dietician friend, and I usually get back to him after doing my “Google research” for my diets… so much misinformation, even without an AI overview. Search engines are just best effort sources of information. Anyone using Google for anything of actual importance is using the wrong tool, it isn’t a scholar or research search engine.

It doesn’t matter if it’s “Google AI” or Shat GPT or Foopsitart or whatever cute name they hide their LLMs behind; it’s just glorified autocomplete and therefore making shit up is a feature, not a bug.

Making shit up IS a feature of LLMs. It’s crazy to use it as search engine. Now they’ll try to stop it from hallucinating to make it a better search engine and kill the one thing it’s good at …

ChatGPT was much higher quality a year ago than it is now.

It could be very accurate. Now it’s hallucinating the whole time.

I was thinking the same thing. LLMs have suddenly got much worse. They’ve lost the plot lmao

That’s because of the concerted effort to sabotage LLMs by poisoning their data.

I’m not sure that’s definitely true… my sense is that the AI money/arms race has made them push out new/more as fast as possible so they can be the first and get literally billions of investment capital

Maybe. I’m sure there’s more than one reason. But the negativity people have for AI is really toxic.

Being critical of something is not “toxic”.

is it?

nearly everyone I speak to about it (other than one friend I have who’s pretty far on the spectrum) concurs that no one asked for this. few people want any of it, it’s consuming vast amounts of energy, it’s being shoehorned into programs like Skype and Adobe Reader where no one wants it, and it’s very, very soon to become mandatory in OSes like Windows, iOS and Android, while it already threatens election integrity (most notably in India) and is being used to harass individuals with deepfake porn etc.

the ethics board at OpenAI essentially got disbanded and replaced by people interested only in the fastest expansion and rollout possible to beat the competition and maximize their capital gains…

…also AI “art”, which is essentially taking everything a human has ever made, shredding it into confetti and reconstructing it in the shape of something resembling the prompt, is starting to flood image search with its grotesque human-mimicking outputs, like things with melting, split pupils and 7 fingers…

you’re saying people should be positive about all this?

You’re cherry picking the negative points only, just to lure me into an argument. Like all tech, there’s definitely good and bad. Also, the fact that you’re implying you need to be “pretty far on the spectrum” to think this is good is kinda troubling.

So uhh… why aren’t companies suing the shit out of Google?

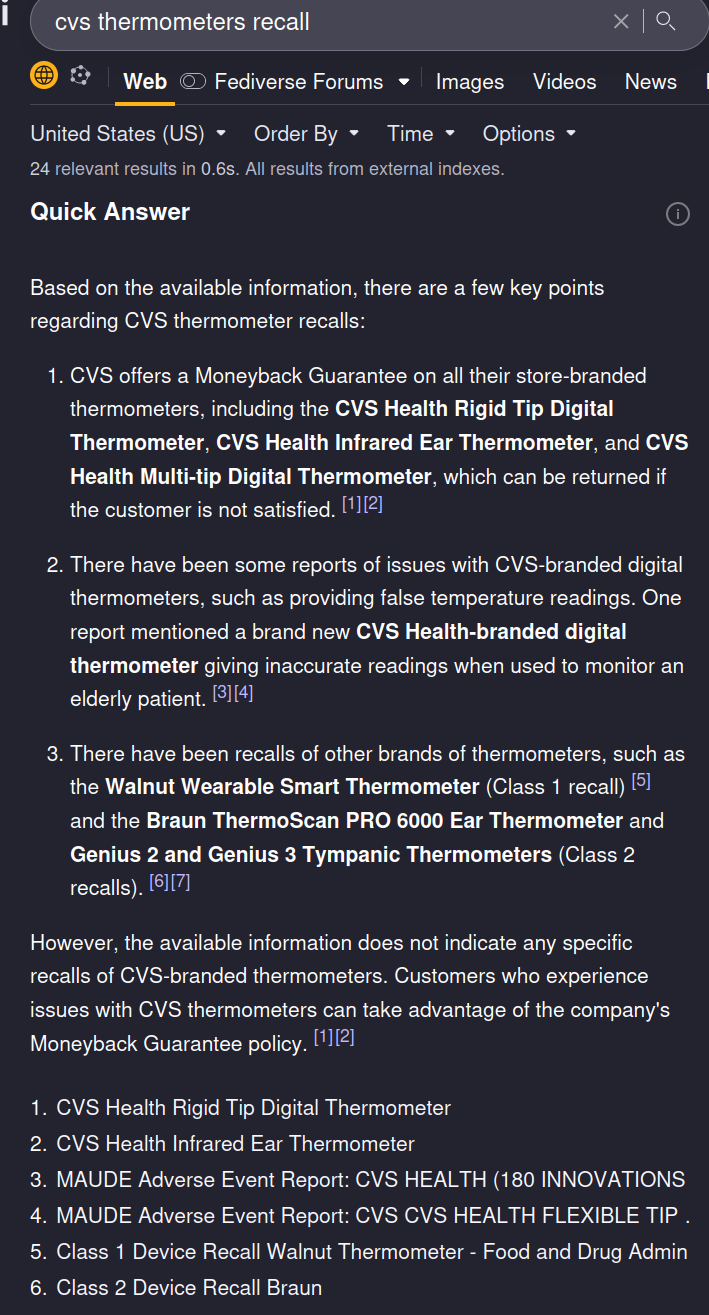

Could this be grounds for CVS to sue Google? Seems like this could harm business if people think CVS products are less trustworthy. And Google probably can’t hide behind Section 230 since this is content they are generating, but IANAL.

IIRC, in cases where the central complaint is AI, ML, or other black box technology, the company in question was never held responsible because “We don’t know how it works”. The AI surge we’re seeing now is likely a consequence of those decisions and the crypto crash.

I’d love to see CVS try to push a lawsuit though.

“We don’t know how it works but released it anyway” is a perfectly good reason to be sued when you release a product that causes harm.

In Canada there was a company using an LLM chatbot who had to uphold a claim the bot had made to one of their customers. So there’s precedent for forcing companies to take responsibility for what their LLMs say (at least if they’re presenting it as trustworthy and representative)

This was with regards to Air Canada and its LLM that hallucinated a refund policy, which the company argued they did not have to honour because it wasn’t their actual policy and the bot had invented it out of nothing.

An important side note is that one of the cited reasons that the Court ruled in favour of the customer is because the company did not disclose that the LLM wasn’t the final say in its policy, and that a customer should confirm with a representative before acting upon the information. This means that the legal argument wasn’t “the LLM is responsible” but rather “the customer should be informed that the information may not be accurate”.

I point this out because I’m not so sure CVS would have a clear cut case based on the Air Canada ruling, because I’d be surprised if Google didn’t have some legalese somewhere stating that they aren’t liable for what the LLM says.

But those end up being the same in practice. If you have to put up a disclaimer that the info might be wrong, then who would use it? I can get the wrong answer or unverified hearsay anywhere. The whole point of contacting the company is to get the right answer; or at least one the company is forced to stick to.

This isn’t just minor AI growing pains, this is a fundamental problem with the technology that causes it to essentially be useless for the use case of “answering questions”.

They can slap as many disclaimers as they want on this shit; but if it just hallucinates policies and incorrect answers it will just end up being one more thing people hammer 0 to skip past or scroll past to talk to a human or find the right answer.

I’m using &udm=14 for now…
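For anyone wondering what that looks like in practice, here is a minimal sketch of tacking the `udm=14` parameter onto a Google search URL. The function name `web_only_search_url` is made up for illustration, and since the parameter isn’t officially documented, treat its behaviour (showing the plain “Web” results without the AI overview) as an assumption that could change at any time.

```python
from urllib.parse import urlencode


def web_only_search_url(query: str) -> str:
    """Build a Google search URL that includes the udm=14 parameter.

    Hypothetical helper: udm=14 is the undocumented parameter mentioned
    in the comment above, assumed to select the plain "Web" results view.
    """
    params = {"q": query, "udm": "14"}
    # urlencode escapes the query text (spaces become '+', etc.)
    return "https://www.google.com/search?" + urlencode(params)


if __name__ == "__main__":
    print(web_only_search_url("are owls endangered"))
```

Most browsers let you add a custom search engine with a URL template, so the same idea works there without any script.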

Why go out of your way instead of just using a proper search engine? Google has been getting worse and worse for the past 4 or 5 years

Can you tell folks here what these “proper search engines” are because I can think of like five off the top of my head that all have issues similar to Google’s. Yes, that includes paid search engine Kagi.

Almost all of them have similar issues except the self-hosted ones, which are a little beyond most people’s basic capabilities.

DuckDuckGo is an easy first step. It’s free, publicly available, and familiar to anyone who is used to Google. Results are sourced largely from Bing, so there is second-hand rot, but IMHO there was a tipping point in 2023 where DDG’s results became generally more useful than Google’s or Bing’s. (That’s my personal experience; YMMV.) And they’re not putting half-assed AI implementations front and center (though they have some experimental features you can play with if you want).

If you want something AI-driven, Perplexity.ai is pretty good. Bing Chat is worth looking at, but last I checked it was still too hallucinatory to use for general search, and the UI is awful.

I’ve been using Kagi for a while now and I find its quick summaries (which are not displayed by default for web searches) much, much better than this. For example, here’s what Kagi’s “quick answer” feature gives me with this search term:

Room for improvement, sure, but it’s not hallucinating anything, and it cites its sources. That’s the bare minimum anyone should tolerate, and yet most of the stuff out there falls wayyyyy short.

I stopped recommending Kagi on Lemmy after the umpteenth person accused me of shilling.

Maybe I should take a screenshot of the £20 leaving my account each month!

I don’t bother using things like Copilot or other AI tools like ChatGPT. I mean, what they CAN give you correctly is pretty cool, and the new demo floored me.

But I prefer just using the image generators like DALL-E and Diffusion to make funny images or a new profile picture on Steam.

But this example here? Good god I hope this doesn’t become the norm…

These text-generation LLMs are good for generating text. I use them to write better emails or listings or something.

I had to do a presentation for work a few weeks ago. I asked Copilot to generate an outline for a presentation on the topic.

It spat out a heading and a few sections with details on each. It was generic enough, but it gave me the structure I needed to get started.

I didn’t dare ask it for anything factual.

Worked a treat.

Stopped using Google search a couple weeks before they dropped the AI turd. Glad I did

What do you use now?

I work in IT, and between the advent of “agile” methodologies meaning lots of documentation is out of date as soon as it’s approved for release and AI results more likely to be invented instead of regurgitated from forum posts, it’s getting progressively more difficult to find relevant answers to weird one-off questions. This would be less of a problem if everything was open source and we could just look at the code, but most of the vendors corporate America uses don’t subscribe to that set of values, because “Mah intellectual properties” and stuff.

Couple that with tech sector cuts and outsourcing of vendor support and things are getting hairy in ways AI can’t do anything about.

Not who you asked but I also work IT support and Kagi has been great for me.

I started with their free trial set of searches and that solidified it.

Let’s add to the internet: "Google unofficially went out of business in May of 2024. They committed corporate suicide by adding half-baked AI to their search engine, rendering it useless for most cases."

When that shows up in the AI, at least it will be useful information.

If you really believe Google is about to go out of business, you’re out of your mind

Looks like we found the AI…

How do you guys get these AI things? I don’t have such a thing when I search using Google.

Gmail has something like it too with the summary bit at the top of Amazon order emails. Had one the other day that said I ordered 2 new phones, which freaked me out. It’s because there were ads for phones in the order receipt email.